Call for Papers

Background

Modern AI-based compression works by transforming an image into a condensed, digital representation. To save space, this representation must be compressed losslessly—a process known as entropy coding, which works best when it knows which values are likely to occur. The fundamental challenge is that this likelihood changes from one image region to another. A flat sky has predictable data, while a detailed texture is highly random. Early AI models used a single, average model to predict these probabilities, which was inefficient.

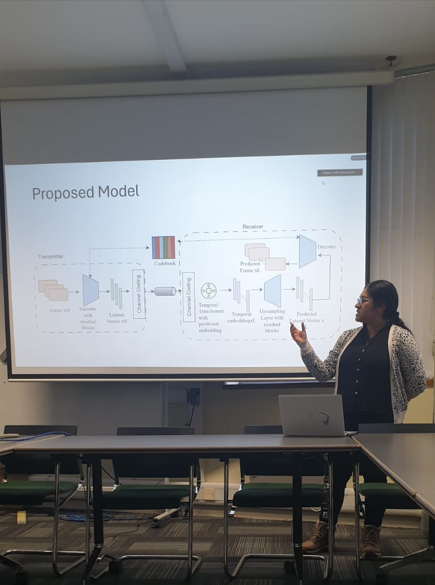

The hyperprior architecture solved this by introducing a clever two-part system. The primary network analyzes the image to create the main compressed representation. A second, auxiliary network then analyzes that representation to estimate its own complexity and variation. This “hyperprior” information serves as a compact data-driven model that dynamically guides the compression process—an application deeply rooted in data science principles such as probabilistic modeling, information theory, and data distribution analysis.

In essence, the hyperprior provides a dynamic, context-aware guide for the entropy coder. This allows the model to use fewer bits for predictable areas and more bits for complex ones, dramatically improving compression efficiency without sacrificing quality and establishing a new standard in neural codec design.

Goal/Rationale

The rapid evolution of machine learning and data science is fundamentally reshaping image and video compression, moving beyond traditional standards. Techniques like hyperprior entropy coding are at the forefront, demonstrating a paradigm shift towards AI-native codecs that leverage data-driven statistical inference and predictive modeling for superior efficiency and scalability.

However, a significant gap exists between cutting-edge research and its practical implementation within industry and product development teams. The primary goal of this workshop is to bridge this gap by translating the data science foundations—such as probabilistic estimation, data distribution modeling, and adaptive learning—into actionable understanding for engineers and researchers.

We aim to provide a comprehensive, accessible foundation in neural compression, with a dedicated focus on the principles and power of hyperprior architectures. This session is designed not just to explain the “what,” but the “why,” demystifying how these models dynamically adapt to data patterns to create highly efficient, context-aware representations.

By equipping participants with this data science–oriented perspective, the workshop empowers them to innovate across applications ranging from streaming and virtual reality to on-device AI and next-generation storage systems—where intelligent data handling is key to performance and scalability.

Scope and Information for Participants

This workshop provides a clear, practical journey from foundational concepts to real-world application. We begin by building intuition for entropy coding as a data science problem—understanding probability estimation, data distributions, and model adaptability in neural networks. The core of the session will be a detailed, non-mathematical exploration of the hyperprior architecture, illustrating how it models uncertainty and variance to achieve state-of-the-art compression.

The scope will extend beyond theory to practical considerations, including model variants, computational trade-offs, and integration into full compression pipelines. Participants will gain insights into data-driven model training, performance evaluation, and benchmarking against conventional codecs.

This session is ideal for data scientists, machine learning engineers, software developers in multimedia, and technical product managers. A basic understanding of deep learning is beneficial, but no prior expertise in compression is required. You will leave with data science–based insights into how hyperprior models work and how to apply them in your own projects.